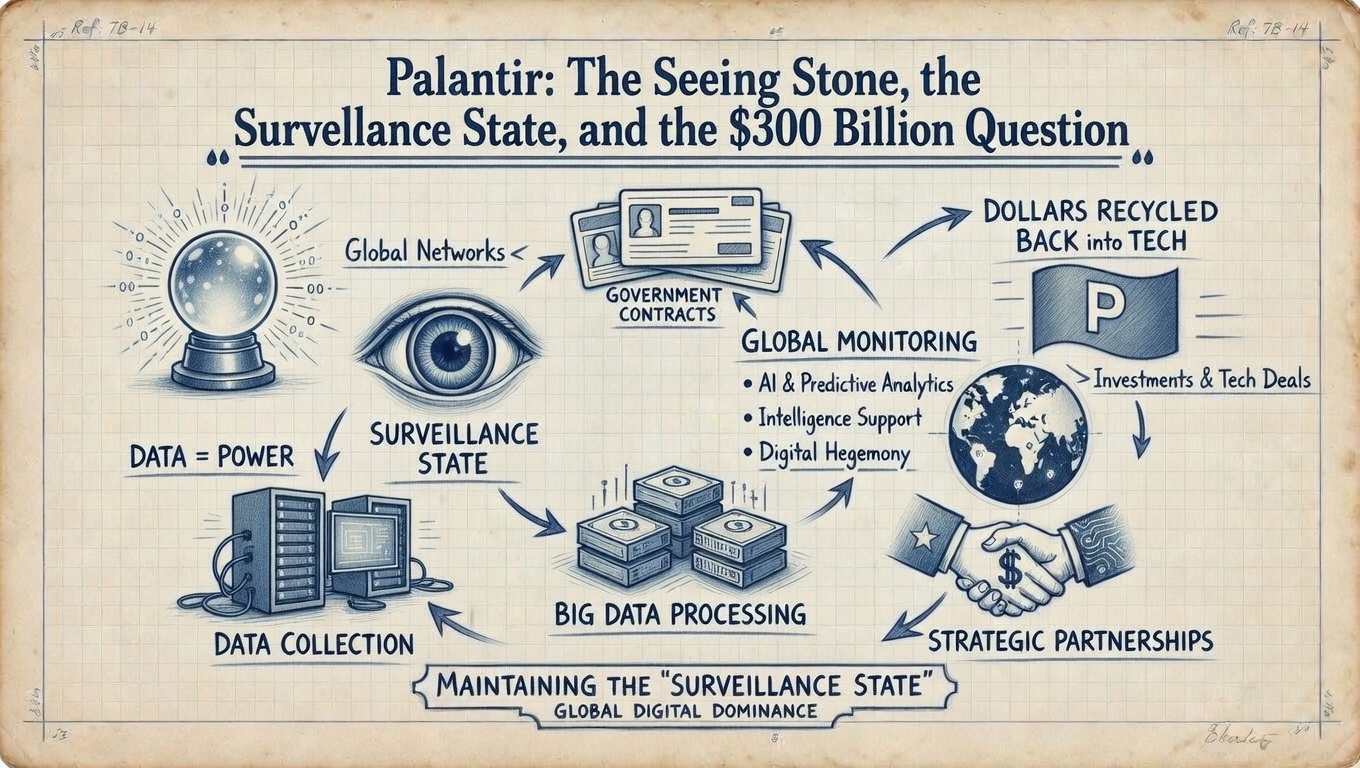

There is a company that built its first product in collaboration with the CIA, reportedly helped track Osama bin Laden, powers battlefield kill-chain decisions in active war zones, is now building a master database of American citizens for the Department of Homeland Security, and trades on the stock market at more than 400 times earnings.

Its investors call it the most important software company in the world. Its critics call it the infrastructure of the surveillance state. Both are probably right. And that tension — between genuinely transformative technology and deeply troubling application — is exactly why understanding Palantir is not optional for any serious investor or citizen in 2025.

This article will tell you what Palantir actually does, why the product is harder to replace than most people realize, what the valuation actually implies, who the real competition is, and why the civil liberties questions are not separate from the investment thesis — they are central to it.

Part I: The Origin — Born From 9/11, Funded by the CIA

The founding story tells you everything about the company's DNA.

On September 11, 2001, Peter Thiel — co-founder of PayPal — began wondering if the pattern-recognition algorithms PayPal used to detect fraudulent transactions could be applied to trace the flow of money used by terrorists. That question became the seed of Palantir.

Palantir was founded in 2003 by Peter Thiel, Stephen Cohen, Joe Lonsdale, Alex Karp, and Nathan Gettings. Thiel funded the initial years almost entirely himself — reportedly over $30 million of personal capital — because traditional venture capital wanted nothing to do with it. According to Karp, a Kleiner Perkins executive told the founders their company was doomed to fail.

Palantir has never masked its ambitions, in particular the desire to sell its services to the U.S. government. The CIA itself was an early investor through In-Q-Tel, the agency's venture capital branch. But the relationship went further than a check. Leaked NSA documents revealed that Palantir's software was created through an iterative collaboration between Palantir computer scientists and analysts from various intelligence agencies over the course of nearly three years. This was not a startup that sold software to the government. It was a startup that built its product with the government, from inside the intelligence apparatus, to serve intelligence purposes.

Thiel named the company after the palantíri, the "seeing stones" of J.R.R. Tolkien's The Lord of the Rings: artifacts that allowed their holders to see across great distances. The metaphor is precise. In Tolkien's books, it is worth remembering, the seeing stones are also instruments of manipulation, powerful enough to reveal truth and dangerous enough to corrupt those who wield them.

Alex Karp — who holds a doctorate in neoclassical social theory and describes himself as a progressive who supported Kamala Harris in 2024 — runs a company that is simultaneously the most ideologically interesting and the most morally complex in American technology. In 2020, the company moved its headquarters from Palo Alto to Denver, citing increasing intolerance and monoculture in Silicon Valley. Karp has argued publicly that Silicon Valley's aversion to ideological confrontation has stifled meaningful innovation. He is not wrong about the diagnosis. The cure he built is a separate question.

Part II: What Palantir Actually Does — The Ontology Explained

Most descriptions of Palantir call it "big data analytics" or "AI software." These descriptions are technically accurate and practically useless. They tell you nothing about why the product is hard to replace or why customers describe it in almost religious terms.

The real product is the Ontology — and understanding it is understanding the company.

The Problem Every Large Organization Has

Every large organization — a military, a hospital, a bank, a manufacturer — is drowning in data. Thousands of databases, systems, spreadsheets, sensors, logs. The problem is not that they lack data. The problem is that the data is fragmented, inconsistent, and disconnected from the actual decisions being made.

A military analyst trying to track a threat network is pulling data from signals intelligence, human intelligence, satellite imagery, financial records, and communications intercepts. These live in separate systems that don't talk to each other, use different terminology, and were built by different contractors for different purposes. The analyst spends most of their time assembling the picture — not analyzing it.

A hospital trying to understand patient outcomes faces the same structural problem. An oil company optimizing a refinery. A bank detecting systemic fraud in real time. The data exists. The synthesis does not.

What the Ontology Solves

The Palantir Ontology is designed to represent the decisions in an enterprise, not simply the data. The prime directive of every organization is to execute the best possible decisions, often in real time, while contending with internal and external conditions constantly in flux. Traditional data architectures do not capture the reasoning that goes into decision-making or the action that results, and therefore limit both learning and the incorporation of AI.

In plain language: instead of giving you tables of data, Palantir builds a living digital model of your organization. Not "here is a database of aircraft" — but "here is Aircraft #4417, connected to its maintenance history, its current location, its assigned crew, the weather at its destination, and the three other aircraft it is operationally linked to." Every entity in the organization — a person, an asset, an event, a transaction — becomes an object with properties, relationships, and real-time state.

In defense and intelligence contexts, the ontology tracks entities like Person, Organization, Event, and Location with links like "travelsTo" and "communicatesWith." In manufacturing, it models Factory → Production Line → Machine → Part with real-time sensor telemetry attached. In finance, it connects Counterparty → Trade → Risk Factor with automated approval actions. In healthcare, Patient → Encounter → Diagnosis → Medication with strict compliance controls.
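To make the idea of "objects with properties, relationships, and real-time state" concrete, here is a minimal sketch of an object-and-link model. It is an illustration only: the class names, fields, and link names such as `assignedCrew` are assumptions made for this example and are not Palantir's actual schema or API.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class OntologyObject:
    """One entity in the model (hypothetical schema, not Palantir's)."""
    object_type: str                      # e.g. "Aircraft", "Person", "Location"
    object_id: str
    properties: dict[str, Any] = field(default_factory=dict)
    # link name -> ids of the objects this one points to
    links: dict[str, list[str]] = field(default_factory=dict)


class Ontology:
    """In-memory registry of objects plus named links between them."""

    def __init__(self) -> None:
        self.objects: dict[str, OntologyObject] = {}

    def add(self, obj: OntologyObject) -> None:
        self.objects[obj.object_id] = obj

    def link(self, source_id: str, link_name: str, target_id: str) -> None:
        # Both ends must already exist, so a link can never dangle.
        if source_id not in self.objects or target_id not in self.objects:
            raise KeyError("both objects must be registered before linking")
        self.objects[source_id].links.setdefault(link_name, []).append(target_id)

    def neighbors(self, source_id: str, link_name: str) -> list[OntologyObject]:
        ids = self.objects[source_id].links.get(link_name, [])
        return [self.objects[i] for i in ids]


ontology = Ontology()
ontology.add(OntologyObject("Aircraft", "aircraft-4417", {"status": "in_service"}))
ontology.add(OntologyObject("Location", "loc-dest", {"weather": "storm"}))
ontology.add(OntologyObject("Person", "crew-1", {"role": "pilot"}))
ontology.link("aircraft-4417", "travelsTo", "loc-dest")
ontology.link("aircraft-4417", "assignedCrew", "crew-1")

# Follow a link from the aircraft to its destination and read live state there.
destination = ontology.neighbors("aircraft-4417", "travelsTo")[0]
print(destination.properties["weather"])
```

The point of the sketch is the shape, not the scale: a question about Aircraft #4417 is answered by walking links between objects rather than by joining tables by hand.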

One data engineer reportedly put it this way: "I spent two years building data models in Databricks. Two weeks in the Ontology and I threw all of that away."

AIP — The AI Layer That Changes Everything

The Ontology was already a powerful product before AI. AIP — the Artificial Intelligence Platform, launched aggressively from 2023 onward — is what makes the investment thesis genuinely interesting.

Together with Foundry and Apollo, AIP forms an operating system that can deliver a full range of AI-driven products: from LLM-powered web applications to mobile applications using vision-language models to edge applications that embed localized AI directly into operational environments.

The critical distinction from every other enterprise AI product: the AI in AIP does not operate on generic data. It operates on the Ontology — the living, governed, real-world representation of the organization. When a military commander asks "which units are available for redeployment right now and what are their fuel levels," the AI is not searching the internet. It is querying a digital twin of the actual battlespace. When a hospital administrator asks "which patients are at risk of sepsis in the next 24 hours," the AI is reasoning over a structured model of every patient, every vital sign, every lab result, every medication — in real time.
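The commander's question above is, underneath, a structured query over object state. A rough sketch of what that query might reduce to follows. How AIP actually translates natural language into operations on the Ontology is not described here, so the `Unit` type, its fields, and the function name are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    # Hypothetical fields, chosen only to mirror the commander's question.
    name: str
    status: str          # "available", "engaged", or "maintenance"
    fuel_pct: float      # remaining fuel as a percentage of capacity


def available_for_redeployment(units: list[Unit], min_fuel_pct: float = 0.0) -> list[Unit]:
    """Return available units at or above a fuel threshold, fullest tank first."""
    ready = [u for u in units if u.status == "available" and u.fuel_pct >= min_fuel_pct]
    return sorted(ready, key=lambda u: u.fuel_pct, reverse=True)


units = [
    Unit("Alpha", "available", 82.0),
    Unit("Bravo", "engaged", 64.0),
    Unit("Charlie", "available", 35.0),
    Unit("Delta", "maintenance", 90.0),
]

for unit in available_for_redeployment(units, min_fuel_pct=30.0):
    print(f"{unit.name}: {unit.fuel_pct:.0f}% fuel")
```

The answer comes from governed, typed state rather than from free text, which is the distinction the paragraph above is drawing.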

This is the quote circulating in investor circles, and it is largely accurate: "The competition thinks the market is data platforms. Palantir thinks the market is AI operating systems. Once you see the ontology layer, it's hard to unsee it. And once an institution runs on it — it's very hard to go back to spreadsheets pretending to be reality."

The "hard to go back" part is not marketing. It is the product's most important feature. The Ontology is not just software. It becomes the organization's institutional memory — every decision, every action, every relationship between entities, encoded and governed. Ripping it out means rebuilding that institutional memory from scratch. The switching cost is not financial. It is operational.

Part III: The Surveillance Question — What the Technology Actually Enables

This section exists because, as the Ultimate Financial Encyclopedia, we cannot write about Palantir without being honest about what the product does to people who are not its clients.

Palantir has drawn sustained criticism for aiding immigration officials in identifying and locating undocumented immigrants. As its footprint has expanded into more government agencies, that criticism has moved from theoretical to operational.

In March 2025, Palantir secured a $30 million contract with ICE to monitor undocumented immigrants — a deal rights advocates warn will expand warrantless surveillance and racial profiling across border communities. More significantly, under the current administration's government efficiency initiative, Palantir is now poised to merge Social Security records, IRS filings, Customs and Border Protection logs, DMV records, and more into a single AI-powered system at the Department of Homeland Security.

This is not a dystopian scenario. It is a procurement contract.

The technology that makes Palantir powerful for a military — fusing fragmented data sources about threats into a single operational picture — works identically when the "threat" being tracked is a political dissident, an undocumented immigrant, or a citizen whose financial transactions pattern-match to a profile someone in government decided was suspicious. The product does not change. Only the target does.

Academic researchers studying the company have noted that Palantir's software is not a mere tool — it shapes institutional culture by embedding new norms about what is worth tracking, what constitutes a pattern, and what triggers action. The Ontology does not just answer questions. It defines which questions get asked, which entities get tracked, which relationships get flagged. The person who builds the ontology decides what "suspicious" means. That person is now, increasingly, a government contractor answering to defense and law enforcement clients.

The BBC series The Capture — which depicts AI-powered systems being used by the state to fabricate criminal evidence — is often described as near-future speculation. It deserves a more precise description: the data infrastructure required for what that show depicts exists today, is commercially available, and Palantir is one of its primary builders. The fiction is in the specific abuse. The technical capability is not fiction at all.

This does not make Palantir evil. It makes it a company whose value proposition and whose threat to civil liberties are identical. The same product. The same feature set. Two different descriptions depending on who is being watched and who is doing the watching.

Part IV: The Valuation — The Most Expensive Software Company on Earth

The business is genuinely performing. Q3 2025 results showed revenue jumping 63% year-over-year to $1.18 billion. U.S. commercial revenue soared 121% to $306 million. The customer base expanded 45% year-over-year. Net dollar retention hit 134% — meaning existing customers are spending 34% more each year on average. The $10 billion U.S. Army Enterprise Agreement further anchors the growth narrative.
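Net dollar retention is simple arithmetic once the cohort is fixed. The sketch below uses an illustrative cohort, not Palantir's reported customer data, and assumes the common definition: current revenue from customers who were already customers a year ago, divided by what those same customers paid a year ago. Companies differ on the exact window and cohort rules.

```python
def net_dollar_retention(revenue_year_ago: float, revenue_now_same_customers: float) -> float:
    """NDR as a ratio for one fixed cohort of existing customers."""
    if revenue_year_ago <= 0:
        raise ValueError("prior-period revenue must be positive")
    return revenue_now_same_customers / revenue_year_ago


# Illustrative cohort only: $100 of revenue a year ago, $134 from the same customers now.
cohort_last_year = 100.0
cohort_this_year = 134.0

ndr = net_dollar_retention(cohort_last_year, cohort_this_year)
print(f"NDR: {ndr:.0%}")
```

Because churned customers stay in the denominator and new customers are excluded, a figure above 100% means expansion inside the existing base more than offset any losses.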

That is not the debate. The debate is the price at which you are buying that performance.

PLTR stock trades at over 400 times earnings and more than 250 times free cash flow. The implied growth requirement to justify current prices is 39–45% annual free cash flow growth sustained for a full decade.
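The implied growth figure can be sanity-checked with a reverse DCF: fix the price, then solve for the growth rate that justifies it. The sketch below is one way to do that under stated assumptions, none of which come from a published model: a market cap of about $300 billion, current free cash flow backed out of the 250x multiple, a 10% discount rate, ten years of constant growth, and 3% terminal growth afterwards.

```python
def dcf_value(fcf_now, growth, years=10, discount_rate=0.10, terminal_growth=0.03):
    """Two-stage DCF: constant growth for `years`, then a Gordon-growth terminal value."""
    value, fcf = 0.0, fcf_now
    for year in range(1, years + 1):
        fcf *= 1.0 + growth
        value += fcf / (1.0 + discount_rate) ** year
    terminal = fcf * (1.0 + terminal_growth) / (discount_rate - terminal_growth)
    return value + terminal / (1.0 + discount_rate) ** years


def implied_growth(market_cap, fcf_now, low=0.0, high=1.0, tolerance=1e-6):
    """Bisection: dcf_value rises with growth, so narrow [low, high] until it matches the price."""
    while high - low > tolerance:
        mid = (low + high) / 2.0
        if dcf_value(fcf_now, mid) < market_cap:
            low = mid
        else:
            high = mid
    return (low + high) / 2.0


market_cap = 300.0            # $ billions, assumed
fcf_now = market_cap / 250.0  # free cash flow implied by a 250x multiple

growth = implied_growth(market_cap, fcf_now)
print(f"implied annual FCF growth: {growth:.1%}")
```

Under these particular assumptions the solved rate falls between 40% and 45%, inside the range quoted above. Change the discount rate or the terminal growth and the answer moves, which is exactly why the inputs deserve more scrutiny than the output.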

Run the DCF logic this encyclopedia always demands.

To justify a market cap of roughly $300 billion on projected 2025 revenue of approximately $4.2 billion, the stock is pricing in a scenario where Palantir grows at 30–40% annually for the next decade, maintains its current margins, and faces no serious competitive erosion. Compounding $4.2 billion at those rates, it would need to be generating roughly $60–120 billion in annual revenue at the end of that decade, placing it among the largest software companies in human history. Not impossible. But the probability distribution around that scenario is doing an enormous amount of work.
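The compounding behind that scenario is easy to check. This short sketch uses only the two figures given above, $4.2 billion of revenue and a $300 billion market cap; the function name is ours.

```python
def compound(value: float, annual_growth: float, years: int) -> float:
    """Value after growing at a constant annual rate for a number of years."""
    return value * (1.0 + annual_growth) ** years


revenue_2025 = 4.2   # $ billions, the projected figure used in this article
market_cap = 300.0   # $ billions, approximate

for growth in (0.30, 0.40):
    future = compound(revenue_2025, growth, 10)
    print(f"{growth:.0%} for 10 years -> ${future:.0f}B revenue")

print(f"price-to-sales on these inputs: {market_cap / revenue_2025:.0f}x")
```

Ten years at 30% gives about $58 billion and ten years at 40% about $121 billion, the same order of magnitude as the range quoted above. The same two inputs imply a price-to-sales ratio of about 71, somewhat under the 80–100 range cited below, which presumably rests on a different market cap or revenue base.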

DA Davidson's head of technology research called Palantir "the best story in all of software" — and still estimated that the company would have to grow at 50% annually for the next five years and maintain a 50% margin just to get its forward P/E down to 30, in line with a company like Microsoft.

For context: Microsoft, whose Azure AI segment grew 175% year-over-year in Q2 2025, trades at a forward P/E of approximately 37 times. Snowflake, on the strength of its AI cloud services, commands a P/S of around 18 times. Nvidia, the company that builds the physical infrastructure all AI runs on, trades at approximately 24 times revenue.

Palantir trades at approximately 80–100 times revenue. Investors are paying for a monopoly that has not yet been established.

Part V: The Competitive Question — Google, Meta, and Who Actually Threatens Palantir

The most common objection to the Palantir bull case goes like this: Google and Meta, after decades of existence, have vastly more data, more computational infrastructure, more AI talent, and more financial resources than Palantir. If the prize is AI operating systems for institutions, why wouldn't the companies with the deepest AI capabilities simply build what Palantir built and take the market?

It is the right question. And the answer is both more reassuring and more conditional than the bulls acknowledge.

On raw AI capability, data volume, and engineering talent — Google and Meta are not behind Palantir. They are a decade ahead. Google's DeepMind is arguably the most advanced AI research organization in the world. Meta's AI infrastructure operates at a scale Palantir cannot match. If the competition were purely about who can build the most powerful general AI system, Palantir would lose without a fight.

But Palantir's moat is not about building better AI. It is about deploying AI into institutions that will not — legally, operationally, or philosophically — give their most sensitive operational data to Google or Meta.

This is the design choice that defines the company. Palantir's model is: bring your AI to the customer's data, inside the customer's environment, under the customer's governance rules. The data never leaves. No training on client data. No monetization of client information. This is the structural opposite of Google's and Meta's business models, which are built on centralizing data to serve advertising clients.

The U.S. Department of Defense cannot put classified battlefield data into Google Cloud. The U.S. Army cannot run its kill-chain decision software on Meta's servers. A major bank cannot hand its entire transaction history to a company whose primary revenue comes from advertising. These are not hypothetical objections. They are legal, regulatory, and operational realities that no amount of Google engineering talent can dissolve.

Google could build Gotham. Google could build Foundry. The reason it has not is not technical incapacity. It is that Google's leadership made a deliberate decision — after the Project Maven controversy in 2018, when thousands of Google employees signed an open letter and several resigned over AI military contracts — to limit its exposure to lethal military AI applications. That decision created the market Palantir occupies. The absence of Google in this space is not a gap waiting to be filled. It is a choice Google keeps making every year.

Meta is a separate but instructive case. When DeepMind was being acquired in 2014, its co-founder Demis Hassabis reportedly declined to sell to Meta (then Facebook) specifically because of concerns about how the technology might be used for military applications, one factor that made a sale to Google preferable. Whether or not every detail of that account is accurate, it illustrates the landscape clearly: the AI companies with the deepest general capabilities have made choices about which applications to pursue, and those choices left a specific gap that Palantir strategically occupies.

So the real question is not whether Google is more technically capable. It is: will Google stay out of this market long enough for Palantir to become irreplaceable?

The honest answer is that Google's position is not permanently fixed. Commercial pressure, geopolitical competition with China, and shifting employee demographics could all move Google back toward defense AI contracts. If that happens, Palantir's commercial moat — outside of government — becomes genuinely vulnerable.

The real competitive threat to Palantir is not Google or Meta. It is Microsoft. Azure already holds defense clearances. Microsoft already has the enterprise sales relationships that took Palantir years to build. Copilot is being deployed in defense environments today. If Microsoft builds an ontology layer with equivalent governance and security capabilities, and its product direction suggests it is trying to, it arrives with distribution advantages that Palantir has never had. This is the competition that should keep Palantir's management awake. Not DeepMind. Not LLaMA. Microsoft Teams already runs on the desktops of nearly every government contractor in America.

Part VI: The Intelligent Investor's Action Plan

Position 1: Understand what you are actually buying before touching this stock

Palantir is not a standard enterprise software company. It is a defense-intelligence-commercial hybrid whose business model depends on the continuation and expansion of state surveillance infrastructure. When you buy PLTR, you are making a bet that:

Government AI spending continues to expand without political interruption

Palantir's ontology moat holds against Microsoft and AWS GovCloud

Civil liberties blowback never reaches the scale that materially disrupts contracts

Revenue grows at 30–40% annually for a full decade

If discomfort with the premise behind these conditions, the dependency on surveillance infrastructure, is a material concern, that is a legitimate reason not to own the stock. This encyclopedia respects that position without judgment. It equally respects the position that Palantir's technology deployed by democracies is preferable to the alternative being built in Beijing. Both are honest positions. Neither is naive.

Position 2: The valuation is not a buying signal — it is a flashing warning

Run the DCF honestly. At a $300 billion market cap on $4.2 billion of revenue, the market is pricing in flawless execution for a decade at growth rates very few software companies in history have sustained.

Buffett's first rule: never lose money. His second rule: never forget the first rule.

A P/E above 400 means you are paying today for more than four centuries of earnings at the current run rate. There is no margin of safety at this price by any conventional DCF methodology. Even the most bullish analyst coverage concludes the math only works if 50% annual growth is sustained for five more years. That is not a margin of safety. That is a prayer.

Munger's principle applies directly: invert. What has to go wrong for this investment to fail? Microsoft closes the government moat. A major contract is terminated for political or ethical reasons. Growth decelerates to 20%. A civil liberties scandal triggers congressional scrutiny of the DHS database project. Or simply — the market reprices to a reasonable multiple when rates rise or sentiment shifts. Any one of these is plausible. Several together would be catastrophic for shareholders who paid today's prices.

The business and the stock are different things. Palantir the business may be genuinely extraordinary. Palantir the stock at 400x earnings requires extraordinary things to keep happening indefinitely.

Position 3: The moat is real — but it is not unassailable

The ontology moat is genuine. A 134% net dollar retention rate is the clearest evidence available that customers are not just staying — they are deepening the dependency. Once an institution's decision logic, data model, and institutional memory are encoded inside Palantir's framework, rebuilding it elsewhere is not a software migration. It is an institutional reconstruction.

But moats erode when attackers are willing to absorb the cost. Microsoft's Azure Arc, its defense cloud infrastructure, and its Copilot rollout represent a slow-motion assault on exactly this moat. The most likely decade-long scenario: Palantir captures and holds the government and defense segment — where its relationships, clearances, and mission-specific track record are genuinely hard to replicate — while facing serious competitive pressure in commercial markets where switching costs are lower and Microsoft's distribution advantage is overwhelming.

Position 4: What to actually watch

Bull signals:

U.S. commercial revenue growth sustained above 50% annually

Net dollar retention staying above 120% — this confirms the moat is deepening, not just holding

New government enterprise agreements at the scale of the $10 billion Army deal

AIP adoption rate — how many existing Foundry customers are layering AIP on top

Bear signals:

Any Microsoft Azure defense contract that directly overlaps Palantir's core government market

Congressional or judicial scrutiny of the DHS master database project — any legislative pause could freeze contract expansion

U.S. commercial revenue growth decelerating below 40%

Stock-based compensation as a percentage of revenue — Palantir has historically been aggressive in diluting shareholders through equity compensation; watch for improvement or deterioration

Valuation trigger: This encyclopedia's standard is to buy only at a price at least 30% below fair value, which is the margin of safety. By any conventional DCF at a 10% discount rate with optimistic growth assumptions, a defensible fair value range for Palantir sits somewhere between $40 and $80 billion, roughly 75–85% below the current market capitalization of about $300 billion. That gap would need to close substantially before this becomes a buy by the standards applied consistently across this encyclopedia.
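The gap and the buy threshold follow directly from those numbers. A small sketch, taking the $40–80 billion fair value range as given, assuming a market cap of about $300 billion, and applying the 30% margin of safety; the function names are illustrative.

```python
def gap_below(market_cap: float, fair_value: float) -> float:
    """How far fair value sits below market cap, as a fraction of market cap."""
    return 1.0 - fair_value / market_cap


def buy_threshold(fair_value: float, margin_of_safety: float = 0.30) -> float:
    """Highest valuation at which the margin-of-safety standard is met."""
    return fair_value * (1.0 - margin_of_safety)


market_cap = 300.0  # $ billions, assumed

for fair_value in (40.0, 80.0):
    gap = gap_below(market_cap, fair_value)
    print(f"fair value ${fair_value:.0f}B: {gap:.0%} below market cap, "
          f"buy below ${buy_threshold(fair_value):.0f}B")
```

On these inputs the gap runs from about 73% to about 87%, and the margin-of-safety entry point lands between $28 and $56 billion of market capitalization, lower still than the fair value range itself.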

Position 5: The working-class lens — who is this company actually for?

Buffett has spent decades articulating the same framework: own businesses that work for you, not against you. Coca-Cola sells you something you choose to buy. Visa charges a small fee for a service you benefit from. The value exchange is clear, consensual, and mutual.

Palantir is structurally different. Its primary customers are governments and large institutions. Its products serve those customers. The working-class individual is not a customer. In many of Palantir's most important applications, you are the subject. The data being fused, the patterns being detected, the decisions being automated — they are about people, not for them.

This does not make it uninvestable. It makes it a company where the investor's interest and the citizen's interest can point in directly opposite directions. Owning PLTR at the right price could make you money. The product that made you money may simultaneously be used to track your financial transactions, monitor your movements, or flag your communications for review by an algorithm you will never see, never challenge, and never know exists.

That tension is real. Name it before you decide. That is what an honest financial encyclopedia does.

Conclusion: The Seeing Stone Cuts Both Ways

Palantir built something genuinely novel. The Ontology layer is not marketing — it is a real architectural advance in how institutions make decisions at scale. AIP on top of that architecture gives it a defensible position in the AI era that Google and Meta, for structural and cultural reasons, have not yet chosen to compete with directly. The $10 billion Army contract, the 134% net dollar retention, the 121% U.S. commercial revenue growth — these are not manufactured numbers. The business is real.

The valuation at 400 times earnings is not real by any standard that Buffett, Munger, or this encyclopedia applies to investment decisions. It is a bet on perfection sustained for a decade. That is not investing. It is speculation — high-quality, well-reasoned speculation in a genuinely exceptional company, but speculation nonetheless.

The civil liberties dimension is not separate from the investment thesis. It is the investment thesis. The reason Palantir has no serious competitor in government AI is that building AI for lethal and surveillance applications is a choice the most technically capable companies in the world have partially declined to make. That choice is Palantir's market. And that market's continued existence depends on the continued willingness of democratic governments to expand surveillance infrastructure — which depends, in turn, on citizens either not knowing or not caring.

The seeing stone shows you everything. It just doesn't tell you whether what you're seeing is a victory or a warning.